publications

* indicates equal contribution.

2026

-

Controllability in preference-conditioned multi-objective reinforcement learning

Submitted to International Conference on Neuro-Symbolic Systems (NeuS), under review, 2026

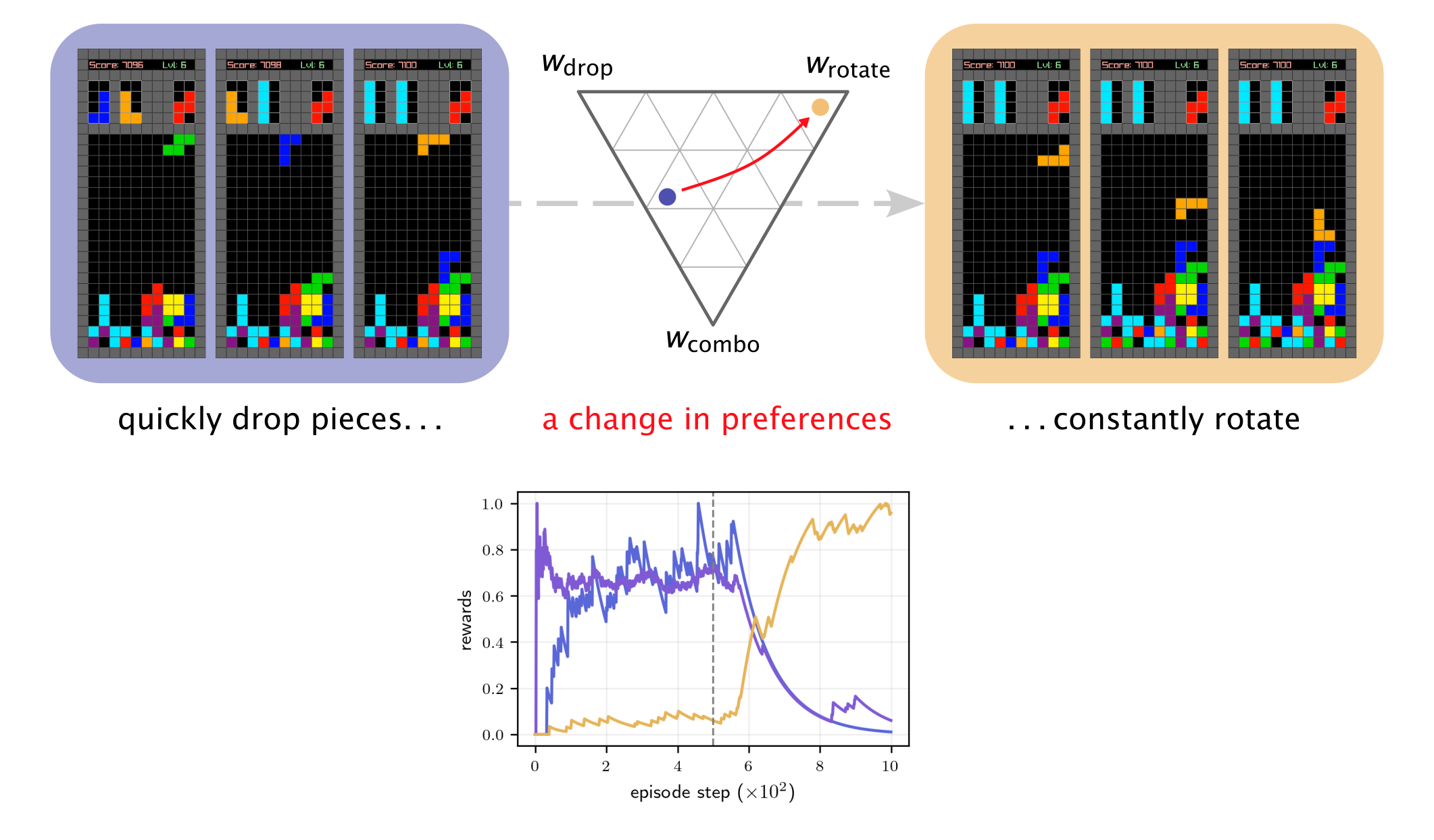

Multi-objective reinforcement learning (MORL) allows a user to express preference over outcomes in terms of the relative importance of the objectives, but standard metrics cannot capture whether changes in preference reliably change the agent’s behavior in the intended way, a property termed controllability. As a result, preference-conditioned agents can score well on standard MORL metrics while being insensitive to the preference input. If we can’t reliably assess our ability to control agents, the symbolic interface that MORL provides between user intent and agent behavior is broken. In this work, we show how mainstream MORL metrics fail to measure the controllability of preference-conditioned agents and present a novel alternative based on rank correlation metrics. We hope the results spur discussion in the community on existing evaluation protocols to consolidate advances in preference adaptation in MORL to larger and more complex problems.

@misc{molins2026controllability,
  title = {Controllability in preference-conditioned multi-objective reinforcement learning},
  author = {{de las Heras Molins}, P. and Yalcinkaya, B. and Peters, L. and Fridovich-Keil, D. and Bakirtzis, G.},
  year = {2026},
}

2025

-

Approximate solutions to games of ordered preference

P. de las Heras Molins*, E. Roy-Almonacid*, D. H. Lee, L. Peters, D. Fridovich-Keil, and G. Bakirtzis

Accepted at IEEE International Conference on Intelligent Transportation Systems (ITSC), 2025

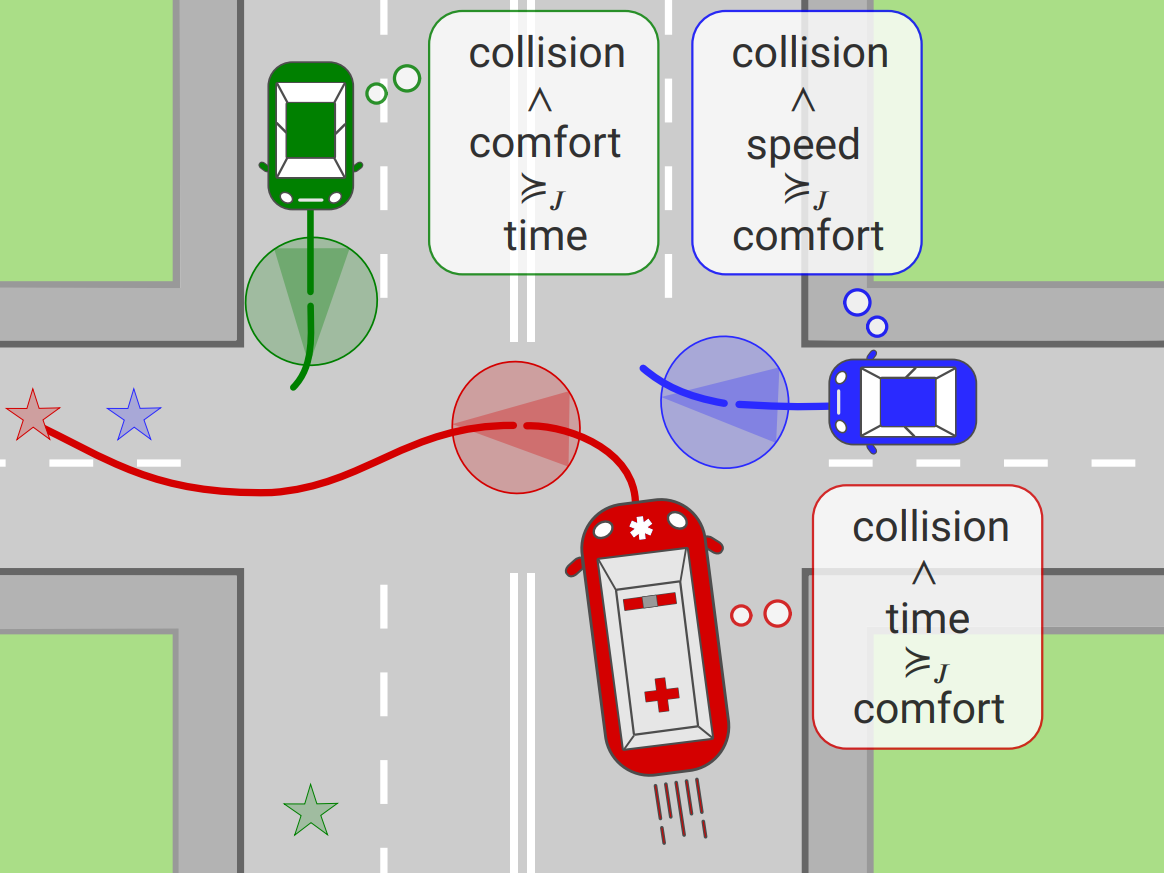

Autonomous vehicles must balance ranked objectives, such as minimizing travel time, ensuring safety, and coordinating with traffic. Games of ordered preference effectively model these interactions but become computationally intractable as the time horizon, number of players, or number of preference levels increase. While receding horizon frameworks mitigate long-horizon intractability by solving sequential shorter games, often warm-started, they do not resolve the complexity growth inherent in existing methods for solving games of ordered preference. This paper introduces a solution strategy that avoids excessive complexity growth by approximating solutions using lexicographic iterated best response (IBR) in receding horizon, termed "lexicographic IBR over time." Lexicographic IBR over time uses past information to accelerate convergence. We demonstrate through simulated traffic scenarios that lexicographic IBR over time efficiently computes approximate-optimal solutions for receding horizon games of ordered preference, converging towards generalized Nash equilibria.

@misc{molins2025approximatesolutionsgamesordered,
  title = {Approximate solutions to games of ordered preference},
  author = {{de las Heras Molins}, P. and Roy-Almonacid, E. and Lee, D. H. and Peters, L. and Fridovich-Keil, D. and Bakirtzis, G.},
  year = {2025},
  eprint = {2507.11021},
  archiveprefix = {arXiv},
  primaryclass = {eess.SY},
  url = {https://arxiv.org/abs/2507.11021},
}